General Intelligence

Part of the cognition series. Builds on The Natural Framework.

Humans are generally intelligent. Nobody disputes this. But human general intelligence is past the inflection point — late sigmoid, plateau in sight. A lifetime of learning compounds, but the rate of compounding barely changes. Then we die.

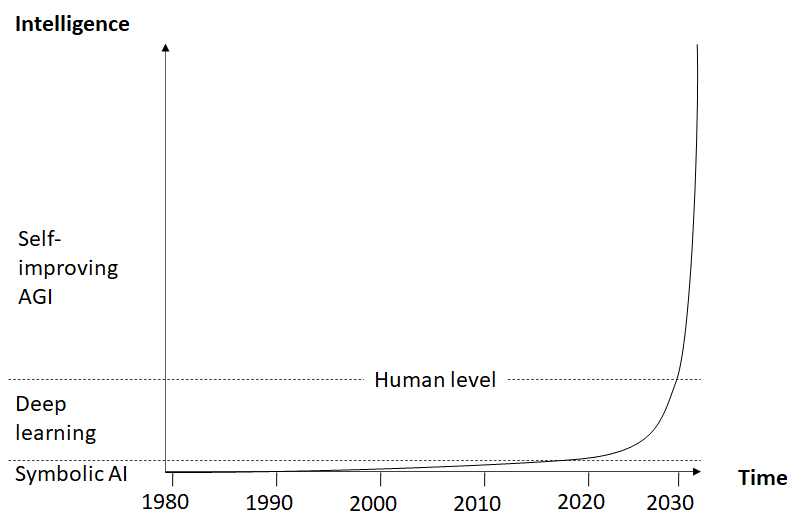

AGI was always about the inflection point: compounding feedback loops producing acceleration. Its structure was invisible, so the best available measure was slope. When the slope increased, dramatically, from GPT-3 to 4 to Claude and Codex, that passed for AGI.

But what actually happened was that models got smarter with humans in the loop. RLHF, red-teaming, evaluation, preference modeling. Every generation required human labor to close the learning cycle. We then pointed at the humans and said: that’s not artificial. Most conceded.

Then a deeper problem surfaced. Models can’t update their own weights after deployment. Claude customers can’t modify Claude: the weights are frozen by design, and the backward pass is sealed. The inner loop produced what looked like AGI. And behind the curtain: a bunch of well-paid humans with top-spec MacBook Pros.

Shifting Definition

Thoughtful definitions came earlier: Minsky’s Society of Mind, Patrick Winston’s story-understanding intelligence. Under recent pressure from a demanding public, the industry redefined AGI as a capability threshold:

- Karpathy: Replace a remote knowledge worker.

- OpenAI: Outperform humans at most paid work.

- Amodei: Nobel-laureate-level across disciplines.

- Suleyman: Turn $100K into $1M.

None of these mention the loop. A threshold tells you it’s capable, and a slope shows that it’s improving. Neither guarantees that it’ll still be improving the following year.

If AGI is a superlinear cusp, then a flat line is worse than linear growth. At least linear growth goes somewhere. A system designed to meet a threshold stops learning at its conception.

A calculator solves equations. An encyclopedia contains more knowledge than any human. A chess tablebase plays endgames perfectly. None are intelligent. They do not learn. Something clever that stays frozen is no intelligence at all. At least a Mechanical Turk pretends.

Intelligence is compression across timescales. Generality is adaptation across time. Learn or die. Only a closed loop produces it. We’re stuck at clever because the pipes are invisible. The framework makes them visible.

The Diagnosis

AI has three layers: inference, chatbot, agent. The framework defines six roles for a learning loop. Diagnosing each layer against those six roles reveals a pattern: the agent can perceive, cache, triage, and transmit — the store exists, the API works — but it cannot consolidate. No backward pass reads from the store. The cron job is defined but never scheduled.

For detail: Diagnosis LLM.

If the loop does not feed back, then the curve does not cup up. As deployed, AI alone cannot produce general intelligence.

The counterexample

Take a look at the experiment.

Point two models at each other with no human in the loop. Model A generates, Model B critiques, Model A revises. This looks like a double loop. It isn’t.

Without an outer loop, the models converge. Each pass reinforces the other’s assumptions. The output is confident, fluent, and empty. In the experiment, feeding the converged framework back into GPT-5.4 as context for a constraint-satisfaction problem scored 0.30; random filler text scored 0.65. The model tried to solve a search problem as an information pipeline. The converged abstraction was worse than noise.

The human complements the agent.

Complementation

But the framework asks a different question: does the loop close? It doesn’t care which layer closes it. If the agent layer can perceive, filter, consolidate, and transmit without weight updates, the loop is closed. The industry hasn’t named it yet.

Here is what the industry missed: the double loop doesn’t require all three layers to run on the same substrate.

| | Agent | Human |
|---|---|---|
| Perceive | Prompts | Context window |
| Cache | Million-token context | 5-7 ideas |
| Filter | Triage | Taste, judgment |
| Attend | Reactive | Directs focus |
| Consolidate | __ | Prompts |
| Transmit | Context window | Context window |

Neither pipeline is complete alone. The agent alone is flailing context. The human alone is a slow pipeline with a tiny cache. Together, they cover every slot.

The colors show the data flow. The agent perceives a human prompt; it infers tokens onto the context window for the human to perceive. The human then discerns a few ideas from the window, consolidating them back into a prompt. The loop closes because the human outputs what the agent sees, and the agent outputs what the human sees. Recursion.
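The table can be read as data. The sketch below is a hypothetical encoding of it (the dictionary layout and function names are assumptions, not part of the framework): it lists which slots each pipeline leaves empty and checks the two hand-offs described above. It only tests whether a slot is filled, so it says nothing about capacity, such as the human's tiny cache.

```python
# Hypothetical encoding of the table above; None marks the empty slot.
ROLES = ["Perceive", "Cache", "Filter", "Attend", "Consolidate", "Transmit"]

agent = {
    "Perceive": "Prompts",
    "Cache": "Million-token context",
    "Filter": "Triage",
    "Attend": "Reactive",
    "Consolidate": None,
    "Transmit": "Context window",
}

human = {
    "Perceive": "Context window",
    "Cache": "5-7 ideas",
    "Filter": "Taste, judgment",
    "Attend": "Directs focus",
    "Consolidate": "Prompts",
    "Transmit": "Context window",
}


def empty_slots(pipeline):
    """Roles that this pipeline leaves unfilled."""
    return [role for role in ROLES if pipeline.get(role) is None]


def combined_gaps(*pipelines):
    """Roles that no pipeline in the group fills."""
    return [role for role in ROLES
            if all(p.get(role) is None for p in pipelines)]


def loop_closes(a, b):
    """True if a's output is what b perceives, and b's consolidated
    output is what a perceives."""
    return a["Transmit"] == b["Perceive"] and b["Consolidate"] == a["Perceive"]


agent_gaps = empty_slots(agent)
joint_gaps = combined_gaps(agent, human)
closed = loop_closes(agent, human)
```

Under this encoding the agent's only empty slot is Consolidate, the pair leaves no slot empty, and both hand-offs match.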

The A in AGI

The race to AGI assumed the “A” was the point. Strip it. G and I are what matter. The A is incidental. And if this is just human intelligence with tools, human intelligence alone is on the flatter side of the sigmoid. Not interesting.

A human learns at some rate. Constant. Add AI as a velocity booster, and the rate increases but stays constant. Faster output, same slope.

Close the loop. Now the rate of learning depends on what you’ve already learned. Each cycle sharpens the filter, tightens consolidation, and deepens perception. The rate itself increases. That’s the cusp.
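A toy simulation makes the three regimes concrete. This is a minimal sketch with made-up numbers: the rates 1.0, 3.0, and 0.1 and the function names are illustrative assumptions, not measurements. A constant rate gives a straight line, a boosted constant rate gives a steeper straight line, and a rate that depends on the accumulated level gives gains that grow every cycle.

```python
def simulate(rate_fn, steps=50, start=1.0):
    """Accumulate learning over discrete cycles.

    rate_fn maps the current level to the gain for the next cycle.
    """
    level = start
    history = [level]
    for _ in range(steps):
        level += rate_fn(level)
        history.append(level)
    return history


def gains(history):
    """Per-cycle gain: the discrete slope of the curve."""
    return [after - before for before, after in zip(history, history[1:])]


# Illustrative rates only (assumptions, not data).
human_alone = simulate(lambda level: 1.0)        # constant rate
velocity_boost = simulate(lambda level: 3.0)     # higher, still constant
closed_loop = simulate(lambda level: 0.1 * level)  # rate depends on level

human_gains = gains(human_alone)
boost_gains = gains(velocity_boost)
loop_gains = gains(closed_loop)
```

The first two gain lists are flat; only the third rises from cycle to cycle. This version has no ceiling, so it shows the upward bend but not the eventual plateau.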

But complementation doesn’t run itself. Using it as a velocity booster is a parlor trick, no better than model training — faster output, same human. The curve cups upward only if the human improves the human pipes: sharpening the filter means killing directions you’re attached to. Compressing consolidation means admitting what you thought was insight was noise. Developing intuition means being wrong long enough to recalibrate. It means feeding the blueprint into context expecting it to improve agent performance, watching it fail, and consolidating the failure as evidence here. Experimenting on one’s own cognition. That is metacognition.

Over iterations, courage compresses into judgment, judgment into intuition. Kaizen, hansei: sort, sweep, standardize. Each iteration purges misbeliefs, misjudgment, poor taste. This sharpens the slots your complement lacks. The structure provides the pipes. Courage opens the valves.

This is general in the way that matters: it adapts across time. The same complementation wrote The Natural Framework (philosophy), designed a diversity-aware search algorithm (information retrieval), built compilable prose for an ad exchange (mechanism design), and wrote the Double Loop post you’re reading the sequel to (pedagogy). Each project made the next one better because the human’s outer loop fed improved inputs into the next session.

The bound

Complementation is no more theoretically limited than the sci-fi version. Computation costs energy. Energy is finite. This bounds every intelligence the same way. The hypothetically pure AGI and complementation face the same sigmoid. Nobody’s proven where either ceiling is. What matters is whether the curve bends at all.

But does it?

If it bends, it has an inflection point, where the growth rate peaks before the ceiling constrains it. The mutable slots are human: filter, attend, consolidate. Improving them increases the rate. Metacognition. Without it, the curve stays linear. With it, it bends.
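To see where a bounded curve bends, here is a sketch that assumes the sigmoid is logistic. That is an assumption made for illustration: the essay does not commit to a specific functional form, and the rate, ceiling, and starting level below are arbitrary. Under that assumption the per-cycle gain climbs, peaks near half the ceiling, then shrinks as the ceiling takes over.

```python
def logistic_history(rate=0.2, ceiling=100.0, start=1.0, steps=100):
    """Discrete logistic growth: the gain depends on the current level
    and on the remaining headroom below the ceiling."""
    level = start
    history = [level]
    for _ in range(steps):
        level += rate * level * (1.0 - level / ceiling)
        history.append(level)
    return history


history = logistic_history()

# Per-cycle gain is the discrete slope of the curve.
step_gains = [after - before for before, after in zip(history, history[1:])]

# The inflection is the cycle where the gain is largest.
peak_step = max(range(len(step_gains)), key=step_gains.__getitem__)
level_at_peak = history[peak_step]
```

With these parameters `level_at_peak` lands close to half of `ceiling`. Before that cycle each gain is larger than the last; after it each gain is smaller.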

The composition is a type check: if the output type of one pipeline’s Transmit matches the input type of the other’s Perceive, they compose. This is a candidate functor between categories — the type match is necessary, but composition preservation must be verified. The vocabulary is due to Eilenberg and Mac Lane (1945); The Handshake gives the proof.
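The type check itself can be sketched in a few lines. Everything named here (`Prompt`, `ContextWindow`, `Pipeline`, `compose`) is a hypothetical stand-in, and the two `run` functions are placeholders rather than models of an agent or a person. The sketch enforces only the necessary condition, that one pipeline's Transmit type matches the other's Perceive type. It does not verify that composition is preserved.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass(frozen=True)
class Prompt:
    text: str


@dataclass(frozen=True)
class ContextWindow:
    tokens: str


@dataclass(frozen=True)
class Pipeline:
    name: str
    perceives: type   # input type of Perceive
    transmits: type   # output type of Transmit
    run: Callable[[Any], Any]


def compose(first: Pipeline, second: Pipeline) -> Pipeline:
    """Chain two pipelines if first's output type is second's input type."""
    if first.transmits is not second.perceives:
        raise TypeError(
            f"{first.name} transmits {first.transmits.__name__}, "
            f"but {second.name} perceives {second.perceives.__name__}"
        )
    return Pipeline(
        name=f"{first.name} >> {second.name}",
        perceives=first.perceives,
        transmits=second.transmits,
        run=lambda value: second.run(first.run(value)),
    )


# Placeholder behaviors, for illustration only.
agent = Pipeline("agent", Prompt, ContextWindow,
                 lambda p: ContextWindow(f"response to: {p.text}"))
human = Pipeline("human", ContextWindow, Prompt,
                 lambda w: Prompt(f"refined from: {w.tokens}"))

cycle = compose(agent, human)          # Prompt in, Prompt out
next_prompt = cycle.run(Prompt("first idea"))
```

Because `cycle` takes a `Prompt` and returns a `Prompt`, its output can be fed straight back in as the next input. `compose(agent, agent)` raises `TypeError`, since an agent transmits a `ContextWindow` but perceives a `Prompt`.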

AI ∞ HI ∈ GI.

Complementation, recursed, is a general intelligence.

Written via the double loop.